Our subject is one of those peculiar phenomena taken for granted in the contemporary world but which from an historical perspective seem anomalous. The phenomenon is that the huge numbers of Protestants in the United States support almost no distinctively Christian program in higher education other than theological seminaries. Even though over 60 percent of Americans are church members and more than half of them are Protestants and over 55 percent of the population generally say that religion is “very important” in their lives, very few people seem to think that religion is “very important” for higher education. Protestants in America are divided about evenly between evangelical and moderate-liberal. Yet neither group supports any major universities that are Protestant in any interesting sense. They do have a fair number of small liberal arts colleges. Those schools that are connected to mainline denominations tend to be influenced only vaguely by Christianity. The more than a hundred evangelical colleges are more strongly Protestant. Some of these are fairly good colleges. But their total number of students is about the same as that of two state universities. There is almost no Protestant graduate education outside of seminaries.

From the point of view of the churches, it is especially puzzling that both Protestant leadership and constituencies have become so little interested in Christian higher education. When clergy lament that lay people are uninformed, why do they not encourage Christian collegiate education? When laypeople complain that the clergy are often poorly educated, why do they not support colleges and universities that would send some of their best men and women to divinity schools? When religious leaders deplore the spread of alien philosophies, why do they not have any serious interest in graduate education?

This situation is particularly striking in light of the long tradition of Protestant higher education. The Reformation began at a university with a scholar’s insight, and educational institutions long played a major role in Protestant success. Educated clergy were essential to the challenge to Catholic authority, and for centuries in Protestant countries, including the Protestant colonies in America, clergy typically were the best educated persons in a town or village. In this country, until well into the nineteenth century, higher education remained primarily a function of the church, as it always had been in Western Civilization. Most educators were clergymen and the vocation of professor was not clearly differentiated from that of clergy. The history of American higher education is not, of course, strictly Protestant. The Catholic experience in particular presents a significant alternative. Nonetheless, until recently, Protestants and their heirs were overwhelmingly dominant in setting the standards for American universities. If these schools had a soul, in the sense of a prevailing vision or spirit, it had a Protestant lineage.

Until the Civil War era, the vast majority of American colleges were founded by churches, often with state or community tax support. Since higher education was usually thought of as a religious enterprise as well as a public service, it seemed natural for church and state to work hand in hand, even after the formal disestablishment of the churches. Protestant colleges were not only church colleges, but also public institutions. Even the state colleges or universities that were founded after the American Revolution, sometimes with Jeffersonian disestablishmentarian intentions, had to assure their constituents that they would care for the religious welfare of their students. Almost all were or became broadly Protestant institutions, replete with required chapel and often with required Sunday church attendance. As late as 1890, a survey of twenty-four state institutions showed that twenty-two still held either required chapel or voluntary chapel services in university buildings, and four required church attendance. These were just the state schools. Church-related colleges and universities, which still typically had clergy for presidents in 1890, were much more rigorously Protestant.

The peculiarity of the contemporary situation, then, is all the more striking, not only because Protestants have forsaken a long tradition of leadership in Christian higher education, but because they have forsaken it so recently and forgotten it so completely. Even throughout the first half of the twentieth century, American colleges and universities typically showed a concern to support religious practice that most university people today would find unthinkable. Again, we can make the point most strikingly by looking at state institutions. A 1939 survey of state universities and colleges showed that 24 percent still conducted chapel services, and at 8 percent these were required. Over half (57 percent) of the state schools took financial responsibility for special chapel speakers or for religious convocations. Forty percent subsidized voluntary religious groups. Though compulsory chapel was indeed coming to an end in state institutions and some states banned the teaching of theology in their schools, compensatory efforts to support voluntary religion developed elsewhere. Between the world wars, there was a substantial movement to add religion courses, departments, or schools of religion at state schools. The best known of these is the Iowa School of Religion, founded in the 1920s, which balanced Protestantism with representatives of Catholicism and Judaism, but was originally designed to promote the practice of religion and the training of religious leaders, not just the detached academic study of religion. The University of Illinois in the 1920s began allowing qualified campus ministries to offer courses for university credit. Remarkably, the Catholic Newman Foundation still continues this practice, although Protestants abandoned it in the face of widespread faculty opposition in the 1960s.

Once again, we have been talking only about state schools. Religion was more prominent, and remained prominent for longer, at most private schools, most of which had a Protestant heritage. Yale did not drop compulsory chapel until 1926 and Princeton did not abandon it completely until 1964. The University of Chicago, founded as a Baptist school in the 1890s, was intended by its first president, William Rainey Harper, to support a civilization that would be based on biblical principles. Methodists also founded an impressive string of universities, including Duke, Emory, Boston University, Syracuse, Northwestern, Southern Methodist, and the University of Southern California, most of which included divinity schools. When Duke was founded in 1924, its founding document stated that “The Aims of Duke University are to assert a faith in the eternal union of knowledge and religion set forth in the teachings and character of Jesus Christ, the Son of God. . . .” Until the 1960s, Duke continued to require undergraduates to take courses in Bible. So did Wellesley College, founded as an evangelical college in the 1870s by friends of Dwight L. Moody.

During the first half of the twentieth century, the effort to preserve some prominence for religion at prestige universities was perhaps best symbolized by the building of massive chapels at schools such as Princeton, Duke, and Chicago, or the Harkness Tower at Yale. From another perspective, however, these architectural statements might be seen as final compensatory gestures in the face of the overwhelming secularization that actually was taking over the schools.

Part of the reason why the religious dimensions of American higher education in the first half of the twentieth century have been so thoroughly forgotten is that even by then they had become peripheral, if not necessarily unimportant, to the main business of the universities. Then, under the heat of new cultural pressures in the 1960s and beyond, most of what was substantial in such religion quickly evaporated, often almost without a trace and seldom with so much as a protest.

So the puzzle is why a Protestant educational enterprise that was still formidable a century ago, and which until then had been a major component of the Protestant tradition, was not only largely abandoned, but abandoned voluntarily. Or in a larger sense, why has Christianity, which played a leading role in Western education until a century ago, now become not only entirely peripheral to higher education but in fact often come to be seen as absolutely alien to the educational enterprise?

When we ask such questions, it is not to suggest that there was a lost golden age to which we should return. From my own point of view of more-or-less traditional Protestantism, the abandonment of interest in Christian higher education by most Protestants is indeed a loss. So is the absence of any substantial place for explicitly Christian perspectives among the various viewpoints represented in academia today. I am convinced that such perspectives are intellectually viable and can and should shape Christian institutions. On the other hand, many Christians would agree that the secularization we are here describing was not all bad. Much of it was the dismantling of a vestigial religious establishment in which a religiously defined social elite imposed formal religious practices on all who would enter the social mainstream. Moreover, the colleges of the nineteenth-century Protestant establishment were typically meager affairs of a few hundred students, hardly comparable to the great universities they often fostered. Whatever their virtues (and they had many), they needed to be changed in substantial ways if they were to survive and serve in twentieth-century settings. Many of the Christian dimensions of the older institutions that were lost were part of tradeoffs that seemed necessary to meet the demands of modernity.

So the story is not simply that of some bad, or naive, or foolish people deciding to abandon one of the most valuable aspects of the Protestant heritage. Rather it is more a tale of some people recognizing serious problems in relating their heritage to the modern world. Whether the results were an improvement, or even coherent, is another question. Nevertheless, educational leaders were responding to some extraordinarily difficult dilemmas, and they cannot entirely be blamed for some of the unintended results of their choices.

What I propose in the present analysis is to emphasize three major sets of forces to which the leadership of emerging universities and their constituencies were responding: first, those having to do with the demands of technological society; second, those having to do with ideological conflicts; and third, those having to do with pluralism and related cultural change. Our understanding of how the current soul of the American universities has been shaped by these forces can then provide a foundation for considering where Christians and other religious people should go from here with regard to mainstream American higher education.

I

The American old-time colleges that dominated the educational scene until after the Civil War retained the outlines of the system of higher education that had prevailed in the Western world for seven centuries. Higher education simply meant expertise in the classics. Students had to show proficiency in Latin and Greek for admission and spent much of their time reciting classical authors. Some students might be only in their mid-teens and the conduct of the schools was strictly regulated on the principle of in loco parentis. Much of the students’ work and daily activities was supervised by a number of tutors, recent graduates usually preparing for the ministry. Professors, who might well be clergymen, also taught a variety of subjects, though they might also cultivate a specialty. Some natural science had been worked into the curriculum as had some small doses of modern subjects, such as political economy. The capstone of the program was a senior course in moral philosophy, taught by the clergyman president. This course applied Christian principles to a wide variety of practical subjects and also was an apology for Christianity, typically based on Scottish common sense philosophy. It would also be preparation for citizenship—a major goal of these colleges. The schools required chapel twice daily, required Bible study, and often required church attendance. These colleges had no real place for scholarship. Theological seminaries, new to America in the nineteenth century, provided the closest thing to any graduate education. Theological reviews were the leading scholarly journals of mid-nineteenth-century America.

Two major pressures combined to bring the collapse of this clerically controlled classicist education by the end of the nineteenth century. One force was the demand for more practical and scientific subjects in the curriculum. This American ideal for education was institutionalized in the Morrill Land Grant Act of 1862, encouraging the state schools oriented toward agricultural and technical education that developed as alternatives to the liberal arts colleges.

The greater force bringing an end to the old classicist colleges was the demand that led to the establishment of universities and graduate education in the decades following the Civil War. Reformers correctly pointed out that for American civilization to compete in the modern world, it would have to produce scholars, and they understood that the amateurism of clerically controlled classicism provided little room for scholarly specialization.

Clerical control of the colleges was thus identified with classicism and amateurism by modern standards. Inevitably, the old-guard clergy defended the system that had helped secure their status as guardians of higher learning. This defense was also inevitably intertwined with defending the Christian character of the old curriculum and of the tightly disciplined collegiate life.

The traditional Christianity of the old guard thus typically came to be cast as the opponent of educational openness, professional progress, and specialized scientific inquiry. Some of the opponents of traditional Christianity made the most of this sudden embarrassment of the old establishment. Andrew Dickson White, founding president of Cornell, for instance, published A History of the Warfare of Science with Theology in Christendom (1896) in which he projected into the past a supposed opposition of dogmatic Christianity to scientific progress. The problem for White was not Christianity per se, but theology, or traditional Christian dogmatism associated with clerical authority.

To reformers it seemed that colleges had to be freed from clerical control, and hence usually from traditional Christianity, in order to achieve something that we take for granted—the emergence of higher education as a separate profession, distinct from the role of clergy. Until this time, although many educators were not clergy, the two positions were not clearly differentiated. Now collegiate education became a distinct profession. And, as was happening with other professions at this time, standards were established that would control membership in the profession. Hence graduate education was widely instituted in the decades following the Civil War, and eventually the Ph.D. became the requirement for full membership in the profession.

Graduate education and accompanying research, which reformers meant to be the real business of the universities, were free of the old collegiate structures and associated Christian controls. Graduate students were older and exempted from disciplines such as chapel and church attendance. Moreover, the natural scientific model for research that dominated the new academic profession proclaimed, as we shall see, the irrelevance of religious belief.

Along with professionalization went specialization. By the 1890s, educators had established professional societies in many of the basic fields such as history, economics, sociology, psychology, and the natural sciences. Prestige in the profession now became dependent on producing narrow specialized studies.

It is important to note that this professionalization was not itself inherently or necessarily anti-Christian. It certainly marked an important step toward the secularization of American schools, but it did not necessarily grow out of ideological antagonism toward Christianity. That is, while it happened to be associated with some antagonism to more traditional Protestantism, which in turn happened often to be associated with the old order, it was not either necessarily or usually associated with antagonism toward Christianity per se. In fact, it was often promoted in the name of a broader, more open Christianity that was now taking more seriously its cultural responsibilities.

This illustrates an important point regarding secularization in the United States. Much of it appeared in a form benign toward Christianity. Secularization in the modern world can be advanced in two major ways—methodological and ideological. (By secularization, I mean simply the removal of some activity of life from substantive influences of traditional or organized religion.) We will consider ideological secularism later; but methodological secularization takes place when, in order to obtain greater scientific objectivity or to perform a technical task, one decides it is better to suspend religious beliefs. Courts of law generally follow this methodology. So do most scientific and technological activities. Most of us, whether strongly Christian or not, approve of such secularization in many cases. We do not want the pious mechanic of our car to tell us that there may be a devil in the carburetor. In the increasingly vast areas of our lives that are defined by technical activities, we expect religion to play, at most, an indirect role.

Christians could therefore readily support many sorts of methodological secularization. Indeed, in the late nineteenth century, the champions of professionalization and specialization in American higher education were themselves predominantly Christians—usually somewhat liberal Christians, but serious practicing Christians nonetheless. For example, Daniel Coit Gilman, the founder in 1876 of America’s first research university, Johns Hopkins, was not, as is sometimes supposed, antagonistic to Christianity. He was, in fact, a serious liberal Christian and in his later years he served as Vice President of the American Bible Society.

Typically, American academics of this era maintained more-or-less separate mental compartments for their religious beliefs and for their academic practices, and they saw the two as complementary rather than in conflict. To pick one of many examples, James Burrill Angell, president of the University of Michigan from 1871 to 1909, was one of the first lay presidents of an American university and pivotal in making Michigan for a time the academic champions of the West. Angell nonetheless maintained that faith and science would harmonize. He remarked, for instance, that “blessed shall be the man . . . who through . . . science and . . . revelation shall learn the one full-orbed truth.” And in 1903, he suggested that faith stands superior in this harmony of faith and reason. “After all, we are not primarily scholars,” he maintained. “Our highest estate is that we are children of the common Father, heirs of God and joint heirs with Christ.”

One implication of this long-standing Protestant view of a harmonious division of labor between science and religion was that it encouraged narrow technical investigation as the model for every field. Since religious truths could stand above and supplement scientific truths, one need not worry excessively about loss of perspective by narrow inquiry. One could always leave the laboratory or one’s social-scientific methodology and attend to higher service to God and humanity.

James Turner, in looking at early developments of the Ph.D. program at Michigan, concludes that in America the scholar was defined as a specialist and hence as not having responsibility to address a broad public. It is important to add that such a narrow definition was initially palatable because it came during a transitional stage, lasting until around World War I, in which scholars typically also had substantial religious affiliations and loyalties, or at least strong religious backgrounds, that provided an impetus for broadly humanistic public expression. Scholarly specialization could be justified by a higher ideal of service.

This two-storied division of labor between scientific technique and religion, or at least high moral ideals, helped foster the development of huge academic territories in which the ideal of free scientific inquiry would be the major operative standard. In Protestant America, such development was easier so long as scholars generally agreed that science and scientific method, however sacred within the professions, were not all there was.

It is one evidence of how sweeping was this establishment of independent territories that the ideal of academic freedom now emerged as the most sacred of all principles within the new academic professions. As historian Richard Hofstadter has documented, the principles of academic freedom had relatively little place in American higher education prior to this century. Rather, as a matter of course, American colleges had been responsible to their boards of trustees, who typically represented outside interests, including religious interests.

Early in this century, however, such outside control of academics came under sharp challenge. The American Association of University Professors, founded in 1915, strongly articulated this critique. In its first statement of principles, the AAUP declared that schools run by churches or by businesses as agencies for propagandizing a particular philosophy were free to do so, but that they should not pretend to be public institutions. Moreover, in addressing “the nature of the academic calling” (as they significantly still put it), the AAUP argued that “if education is the cornerstone of the structure of society and if progressing in scientific knowledge is essential to civilization, few things can be more important than to enhance the dignity of the scholar’s profession. . . .” Scientific knowledge and free inquiry thus gained near-sacred status.

Such a view of the role of technical scientific knowledge, broadly conceived to include the social sciences and most other disciplines, in effect cleared a huge area of academic inquiry in which religious considerations would not be expected to appear.

Liberal Protestants, who dominated the universities, generally supported such developments. Since the heart of liberalism was its endorsement of the best in modern culture, scientifically based free inquiry, together with its technological benefits, would automatically advance Christian civilization. To build a better civilization was ultimately the Christian mission of the university. Woodrow Wilson, though a moderate in his personal theological views, summarized this agenda in his much noticed speech in 1896, “Princeton in the Nation’s Service,” which set the agenda when Wilson became Princeton’s first lay president in 1902. Wilson is an interesting case, because in the same speech he questioned the over-extension of the scientific ideal to all disciplines. His antidote, however, was more humane humanities and more practical moral activity. So while as president of Princeton Wilson avoided statements about Christian theology that would appear in any way sectarian, he fervently preached morality and service.

Perhaps the outstanding example of a Christian promoting professionalization and technological methodology in the interest of Christian service was Richard T. Ely. Ely was one of the principal organizers of the American Economic Association in 1886 and its first secretary. In the first Report of the AEA, Ely declared that “our work looks in the direction of practical Christianity,” and he appealed to the churches as natural allies of the social scientists. At the University of Wisconsin, where he taught, Ely came under fire in 1894 in one of the early academic freedom cases because his social Christianity, especially his pro-labor stance, offended some of the business supporters of the university.

It was natural, then, that when the AAUP was founded Richard T. Ely should be one of the authors of its General Declaration of Principles. In that Declaration we also find a combination of a faith in the ideal of independent scientific inquiry and the service ideal. In the organization’s description of three major functions of the university, one is “To develop experts for various branches of the public service.” Even as explicitly Christian ideals might be beginning to fade for many Protestant scholars, scientific professionalism still could be justified by its ability to provide “experts in the public service.” In this view, there was nothing to worry about in the advance of independent scientific knowledge. It would still promote virtue.

The theme that ties together all the foregoing developments is the insatiable demand of an emerging industrialized technological society. More than anything else, what transformed the small colleges of the 1870s into the research universities of the 1920s and then into the multi-universities of the late twentieth century was money from industry and government for technical research and development. Universities became important in American life, as earlier colleges had not been, because they served the technological economy, training its experts and its supporting professionals, and conducting much of its research. At least as early as the Morrill Act of 1862, the demand for practicality had been reshaping American higher education. Schools have continued to offer as an option a version of the liberal arts education they traditionally provided; but such concerns and the faculties supporting them have played steadily decreasing roles among the financial forces that drive the institutions. Ironically, while twentieth-century universities have prided themselves on becoming free of outside religious control, they have often replaced it with outside financial control from business and government, which buy technical benefits from universities and hence shape their agendas. Any particular such technological and practical pressure can usually be justified, of course, by the higher cause of service to society, or at least by its profitability. So practically minded Christians, especially those who rather uncritically regarded American government and business as more-or-less Christian enterprises, have readily supported such trends. For instance, they typically want their children to gain the economic and social benefits of such education. Nonetheless, the dominance of these technological forces has expanded the areas of higher education where Christianity would have no substantive impact and where many Americans see it as having almost no relevance.

II

While the pressures toward technical specialization helped push traditional Christian educational concerns to the periphery of universities, support for such methodological secularization, as we have seen, came as often from Christians as non-Christians. Such methodological secularization, however, inevitably proved an important ally of ideological secularism. Christians who for methodological reasons thought that technical disciplines were best pursued without reference to religious faith promoted the same standards for those disciplines as did secularists who believed that all of life was best lived without reference to religious faith.

For our immediate purposes, it may be helpful to oversimplify a great deal by reducing to just three broad categories the ideological contenders for the soul of the American university over the past century and a quarter. First, there was traditionalist Protestantism which was dominant at the beginning of the era, but easily routed by liberal Protestantism, sometimes aided by some version of secularist ideology. Then, from about the 1870s until the 1960s, we have the dominance of a broadly liberal Protestantism which allied itself with a growing ideological secularism to form a prevailing cultural consensus. Since the 1960s, we see the growing of a more aggressive pluralistic secularism which provides no check at all to the tendencies of the university to fragment into technical specialities.

During the early era from the 1870s to about World War I, American colleges and university faculties included relatively few out-and-out secularists or religious skeptics. A few faculty members, however, were frank proponents of an essentially Comtean positivism. Comtean positivism proposed an evolutionary view of the development of human society in three stages. First, there was an era of superstition or religious dominance. Then came an era of metaphysical ideals (which was something like the liberal Protestant era). Finally, there was the promise of an era of the triumph of enlightened science which would free humanity from both superstition and metaphysics and allow it to follow a higher scientifically derived morality.

By the 1920s, such views were being more openly and widely expressed by academics, as is indicated by the influence during that era of John Dewey, who expressed almost exactly the Comtean view. Such views could blend with those of liberal Protestantism because they too promised to liberate American society through science. Arthur J. Vidich and Stanford M. Lyman in their recent study of American Sociology summarize this point in that field:

By the third decade of the twentieth century an anti-metaphysical Comteanism was combined with statistical technique to shape a specifically American positivism which, activated as social technocracy, promised to deliver America from the problems that had been addressed by the old Social Gospel. . . .

Liberal Protestants and such post-Protestants were on the whole allied during this period. Both agreed that traditional Protestantism was intellectually reactionary, and within only about fifty years, they effected a remarkable revolution that eliminated most traditional Christian views from respectable academia. Both liberal Protestants and secularists used the prestige of evolutionary biology to discredit biblicism and to promote the virtues of a scientifically dominated worldview. Liberal Protestants and secularists furthermore agreed that the scientific age had brought with it higher-level moral principles that could form the basis for a consensus of values that would benefit all humanity.

Typically, they hoped to find a base for such values in the evolution of Western culture itself. The curricular expressions of this impulse were English literature courses which emerged by the turn of the century and the inventions of the Western Civilization and humanities courses. Western Civ courses dated from the World War I era and eventually were widely adopted throughout the nation. One might wonder why secularist positivists would be among those promoting the humanities. Yet we must remember that positivism always included the promise of a higher morality. Since in its historicist worldview civilization itself was the only source of values, the study of the evolution of Western humanities was an important avenue towards positivism’s ends. Liberal Protestantism promoted a similar outlook, seeing God progressively revealed in the best in civilization.

Such curricular measures can also be seen as major efforts to stem the tide of technological and professional pressures on higher education. With classical requirements collapsing rapidly and formal religion having been pushed to the periphery, the ideals and achievements of the humanities could still provide coherence to the curriculum.

World War II underscored the sense of the importance of education that would pass on the best of Western values. The attacks on broadly Christian and liberal culture from Nazism and fascism on the right and Marxism on the left presented the civilization with a major moral crisis. With traditional Christianity gone as a source for coherence, what else was there? The academic elite typically found the answer in the humane tradition of the West. This view is eloquently stated in the influential Harvard Report of 1945 on General Education in a Free Society. The Report clearly recognizes that “a supreme need of American education is for a unifying purpose and idea.” Furthermore, it suggests frankly that “education in the great books can be looked at as a secular continuation of the spirit of Protestantism.” As the Bible was to Protestantism, so the great books are the canon of the Western heritage that education should pass on. Such a heritage, the Report adds (in a nice reiteration of the Whig tradition), is education for democracy, since it teaches the “dignity of man” and “the recognition of his duty to his fellow men.” Scientific education is part of that heritage, fostering the “spiritual values of humanism” by teaching the habit of questioning arbitrary authority. These ideals may be seen as the last flowering of the Whig-Protestant ideal which, as in the Report, celebrated the harmonies of broadly Protestant and democratic culture.

That the days were numbered when such elite educational ideals might hope to set the standard is suggested by the strikingly different tone of the even more influential 1947 report of The President’s Commission on Higher Education for Democracy. This report was a sort of manifesto for the era of mass education that began with the return of the war veterans, and it indicates the direction that higher education would go once it became essentially a consumer product, largely controlled by government. In the following decades the vast expansion of higher education would take place overwhelmingly at state or municipal schools. Not only did this trend accelerate secularization, it also strengthened the practical emphases in American education. So while the President’s Commission, like the Harvard Report, seeks coherence in a Western consensus, it finds it not in a great tradition, but in a pragmatist stance that assumes current democratic values to be a norm. The report has a thoroughly Deweyan ring to it. “A schooling better aware of its aims” can find “common objectives” in education. The consensus that will emerge embraces both practical education and general education, with the goals of the latter characterized as giving the student “the values, attitudes, and skills that will equip him to live rightly and well in a free society.” These will be “means to a more abundant personal life and a stronger, freer social order.”

Whatever the merits of such ideals, the rise of mass education after World War II made it almost inevitable that the pragmatic approach would triumph over adherence to an elite heritage in establishing a secular consensus. Nonetheless, through the Kennedy years the two approaches could grow side by side, sometimes within the same institutions. Humane liberal arts and practical approaches might be in competition, with the pragmatic gaining ground, yet most American educators agreed that there ought to be an integrative consensus of democratic values. By now the consensus was largely secular and most often defined by persons who were themselves secularists. Nonetheless, this was also an era of mainstream religious revival, and Christians, who were still numerically well-represented on campuses, seldom provided serious dissent from the search for democratic ideals. Since public consensus was the ideal, it was thought best to be low-key, entirely civil, and broadly inclusive about one’s faith. Although openly confessing Christians could play supporting or even mildly dissenting roles in society (Reinhold Niebuhr comes to mind), the essential social ideals that higher education would promote would be defined in secular terms and largely by secularists.

Secularism as an ideology also received support from those who had less lofty reasons to abandon Christian standards. The revolution in sexual mores had an incalculable, but certainly immense, impact on weakening religious ideology and control. Established religion was widely associated with sexual repression. Changing national mores and opportunities presented by coeducation provided immediately compelling motives for ignoring religious issues during one’s college years. Of course, the more spiritually minded often circumvented these issues with a species of methodological secularization. In any case, a history of changes in higher education no doubt could be written from the perspective of questions of sexuality alone.

III

The chief factor hampering Christianity in its response to the above challenges was the coincidence of those challenges with the further problem of the pluralistic nature of modern society.

The Catch-22 for Christians pondering the relationship of religion and public policy in a culturally diverse society is that if Christianity is to have a voice in shaping public philosophy, it seems that equity demands that it do so in a way that gives Christians no special voice. Public justice appears to demand of Christians that they receive no special privilege, but rather provide equal opportunity for all views. In a land where Christians have been culturally dominant and are still the majority, achieving such a public equity seems almost to require that Christianity be discriminated against. Liberal Christians in particular, who defined their mission largely in public terms and made equity a pre-eminent concern, could get caught in stances that would in effect lead to putting themselves out of business.

This dilemma is well illustrated in Protestant institutions of higher learning which, as we have seen, typically aspired to be public institutions as well as church institutions, pursuing the laudable goal of serving the public as well as their own people. The dilemma was not apparent, however, so long as Protestant cultural dominance was presumed. As long as it could be taken for granted that Protestantism was culturally dominant or that it ought to be dominant, the goal of church-related institutions was to shape the whole society according to Protestant standards. This meant not only that Catholics ought ideally to be converted to Protestantism, it also suggested that all Americans should adopt the set of cultural ideals that Protestants espoused. These ideals were classically expressed in the Whig tradition of the mid-nineteenth century. Perpetuating a strain of American revolutionary rhetoric that had its roots in the Puritan revolution in England, Protestants associated political and intellectual freedom with dissenting Protestantism, and monarchism, tyranny, and superstition with Catholicism. The Protestant-Whig ideals included affirmations of scientific free inquiry, political freedom, and individualistic moral standards such as hard work. Nineteenth-century college courses in moral philosophy were part of the effort to set such nonsectarian Protestant standards for the nation.

By the end of the nineteenth century, however, nonsectarianism was beginning to have to be defined to include more than just varieties of Protestantism. Exclusivist Protestant aspects of the outlook were becoming an embarrassment in an increasingly diverse society. State schools felt the pressures first, but very soon so did any schools, especially prestige schools, that hoped to serve the whole society. In the decades from the 1880s until World War I such schools rapidly distanced themselves from most substantive connections with their church or religious heritages, dropping courses with explicit theological or biblical reference and laicizing their boards, faculties, and administrations.

At the beginning of the period, one important way of demonstrating that a nationally oriented school was “nonsectarian” was to avoid any formal creedal test for faculty. Major older schools, however, were still often hiring their own alumni, and old-boy networks could informally ensure some sympathy to religious traditions. Old-time college presidents also had substantial discretionary powers in hiring and could select persons of “good character.” By the end of the century, however, such informal faculty screening for religious views was breaking down, though there remained some discrimination against Catholics and a great deal against Jews. Anyone with Protestant heritage, however, could gain a position regardless of his religious views or lack thereof. Once this pattern developed, it was only a matter of time before religious connections were dismantled.

How rapidly or how thoroughly this disestablishment took place depended, as I have been suggesting, on how public the institution took itself to be. Catholic schools and smaller sectarian colleges could retain exclusivist aspects of their heritages without difficulty. Prospective students knew that if they applied to a Notre Dame or a Concordia College they were choosing a church school with church standards. Some mainline colleges, such as a Westminster, a Bucknell, or a Davidson, could maintain a strong church identity during the first half of the twentieth century so long as they were willing to remain small and somewhat modest. But what of a Chicago, Yale, or Princeton that aspired to be a major culture-shaping institution? Was there any way they could remain substantially Christian?

Again, the liberal Protestantism that dominated most major American colleges and universities in this era offered a solution. Essentially, this solution amounted to a broadening of the old Whig heritage. The white Protestant cultural establishment could retain its hegemony if the religious heritage were so broadly defined as to be open to all opinions, at least all liberal opinions. As we have already seen, liberal Protestantism’s two-level approach to truth allowed the sciences and the professions to define what actually was taught at universities, to which higher religious and moral truths could be added as an option.

To respond to pluralism and to retain hegemony, the specifically religious dimensions of Protestantism had to be redefined. By the early decades of the century, exclusivist elements of the heritage were abandoned and Christianity was defined more or less as a moral outlook. It promoted good character and democratic principles, parts of the old Whig ideals palatable to all Americans. So prestige universities, virtually all administered by men of Protestant heritage, could continue to promote the melting pot ideal of assimilation of all Americans in a broad moral and political consensus.

Even this solution presented certain problems, among them attitudes toward prospective Jewish students and faculty. Jewish immigration increased dramatically in the decades around the turn of the century, and Jewish university applications increased more. By the 1920s, despite some protests, most of America’s prestige schools had set up quotas, often of around 15 percent, so as not to be overwhelmed with Jewish students. Sometimes they still cited the “Christian” ethos that they were ostensibly preserving, even though Christian teaching as such had disappeared. In many leading American schools Jewish faculty were discriminated against or excluded, especially in the humanities, until after World War II.

This example points out the larger problem. Could Protestants directing culturally leading institutions legitimately discriminate in any way against people from other traditions? Especially after World War II and the Holocaust, such issues became acute for American educators of Protestant heritage. The Whig-democratic ideals they had long proclaimed included, after all, the principles of equity and integration of all peoples that cultural outsiders were now claiming.

By now the answer to the puzzle with which we began this essay should be becoming apparent. Why did Protestants voluntarily abandon their vast educational empire and why are they even embarrassed to acknowledge that they ever conducted such an enterprise? The answer is that they were confronted in the first place with vast cultural trends such as technological advance, professionalization, and secularism that they could not easily control; and their problem was made the worse by pressures of cultural pluralism and Christian ethical principles that made it awkward if not impossible for them to take any decisive stand against the secularizing trends.

Although recognition of principles of equity came into play, I should perhaps not make the decisions involved sound so exclusively high-minded. An alternative way of describing what happened is that eventually the constituencies of schools—whether faculty, students, alumni, or other financial supporters—would not stand for continuing Protestant exclusivism. Such groups combined principles of tolerance with the self-interest of those in broadening constituencies that were not seriously Protestant. The most formidable such outside pressure came, for example, from the Carnegie Foundation early in this century when it established the college retirement fund that eventually became TIAA/CREF. Initially the foundation made it a condition for colleges participating in the program that they be nonsectarian. Other business contributors, as well as state legislatures, made similar demands. Administrators, who have been said to be able to resist any temptation but money, clearly had a good deal of self-interest in recognizing the values of pluralism and disestablishment.

Nonetheless, even if self-interest served mightily to clarify principle, disestablishment seemed from almost any Christian perspective the right thing to do.

One important encouragement to such disestablishment was that it could be justified on the grounds that voluntary religion was in any case more healthy than coerced religion. This was a strong argument raised against required chapel services, and almost invariably schools abolishing the requirement went through a transitional era of voluntary chapel attendance, which often flourished for a time. Moreover, during the decades around the turn of the century when formal disestablishment was taking place at the fastest rate, voluntary Christianity on campuses was probably at its most vigorous ever. College administrations often encouraged YMCAs and YWCAs, which acted much as InterVarsity Christian Fellowship does today. Students of the era helped organize the Student Volunteer Movement, under the initial sponsorship of Dwight L. Moody, and spearheaded the massive American foreign missionary efforts that reached their peak around World War I. Administrations also often encouraged Protestant denominational campus groups as well as their Catholic and Jewish counterparts. As long as such activities, however peripheral to the main business of universities, were available to the minority of students who might be interested, and as long as the religious programs at least modestly flourished (as they did through the 1950s), disestablishment could be seen as a reasonable accommodation to pressures for change.

This solution also fit the widely held view that science, which provided the ultimate guidelines for intellectual inquiry, and religion could operate in separate but complementary spheres. The division of labor between universities and theological seminaries, where professional religious training was still available, institutionalized the same principle. At some private universities, this differentiation was instantiated by the continuance of divinity schools, which made other disestablishment of Christianity at those institutions seem less threatening.

Whatever all the good and compelling reasons not to resist disestablishment, something seems wrong with the result, if viewed from a Christian perspective or in terms of the interests of Protestant churches and their constituencies. The result that today Christianity has only a vestigial voice at the periphery of these vast culture-shaping institutions seems curious and unfortunate from such perspectives.

One must ask then how it is that if Protestant leaders in higher education generally made the right—or at least virtually inevitable—decisions, what has gone wrong that the outcome should be so adverse to the apparent interests of Protestant Christianity?

I think the answer lies in some assumptions deeply embedded in the dominant American national culture that Protestantism had so much to do with shaping. These are simply the assumptions, already alluded to, that there should be a unified national culture of which Protestant religion ought to play a leading supportive part. At the time of the original British settlements in the seventeenth century, Christianity was presumed to provide the major basis for cultural unity. In the eighteenth century, when the colonies formed into a nation, Enlightenment ideals provided a less controversial scientific basis for a common culture, but Protestantism played a significant supportive role, especially in its nonsectarian guise.

Given such an attractive goal as a unified national culture, it was natural that the way in which dominant American Protestants would deal with cultural diversity was to attempt to absorb it. This was, in a sense, what the Civil War was about—a wrenching episode that illustrates both the virtues and the dilemmas of seeking nationally unified moral standards. In the next century, as increasing ethnic and religious diversity became impossible to ignore, the ideal became more vaguely Christian or Judeo-Christian and was referred to simply as “democratic.” Through the Kennedy era, however, the ideal remained consensus and integration. The civil rights campaign and efforts to integrate blacks into the mainstream seemed to underscore the moral correctness of this strategy.

The intellectual counterpart to this strategy was the belief, supported by the assumptions of the Enlightenment, that science provided a basis for all right-thinking people to think alike. This view was especially important in the United States, since Enlightenment thought had so much to do with defining national identity. While there might be disagreement as to whether Christianity was integrative or divisive, scientific method was supposed to find objective moral principles valid for the whole race. Dominant Christian groups joined in partnership with science in underwriting this integrative cultural outlook. By the twentieth century, as traditional Christianity appeared too divisive to be an acceptable public religion and liberal Christianity began to drift into a grandfatherly dotage of moralism, scientific method emerged as the senior partner in the integrative project of establishing a national culture.

For American education this meant the continuing assumption that a proper educational institution ought to be based on an integrative philosophy. There was, of course, some internal debate as to what that philosophy should be or how it should be arrived at, but so long as proponents could claim something of the sanction of the scientific heritage, they could present their outlooks, not as one ideology among many, but as ones that were fair to everyone, since they were, if not wholly objective, at least more or less so.

As attractive as all this might seem and as necessary as it might be to cultivate some common culture, there were several things wrong with the universalist assumptions on which these integrative ideals were based. First, they were socially illusory, since America was not just one culture, but a federation of many. Second, the universalist views were intellectually problematic. Not all right-thinking people thought the way white Protestants did, and there was also no universal objective science that all people shared. Rather, science itself took place within frameworks of pre-theoretical assumptions, including religiously based assumptions. Finally, however attractive and plausible pursuit of this integrative cultural enterprise might be, it was not an enterprise to which Christians could forever fully commit themselves if they wanted to retain their identities as Christians. Christianity, whatever else it is, is not the same as American culture, and hence it cannot be coextensive with its public institutions. As we have seen, liberal Protestants during the first half of the twentieth century dealt with this problem not by sharpening their identity over against the culture, as did fundamentalist and Catholic intellectuals, but rather by blurring their identities so that there was little to distinguish them from any other respectable Americans. Hence, until the 1960s, they could continue to control America’s most distinguished academic institutions. Only if there had been a strong sense of tension between Christianity and the integrative American culture—a tension that was embryonically suggested by neo-orthodoxy but never substantially applied to challenge the idea of a culturally integrative science—might there have been a search for radical alternatives. But a strong sense of such tensions was not a part of liberal Protestantism.

IV

This analysis of the problematic assumptions of dominant American culture and religion should help us to understand and evaluate what has happened to universities since the 1960s, including the demise of the old liberal Protestant establishment. Especially intriguing is the paradoxical character of the major contenders to fill the establishment’s place.

By the mid-sixties, two forces were converging to destroy the secularized liberal Protestant (or now secularized Judeo-Christian) Enlightenment consensus through a sort of pincer action.

First was the triumph of mass education with its ideal of practicality. I need not detail this; but clearly it tended to destroy any real consensus in the universities, substituting for it a host of competing practical objectives. Such trends were reinforced by ever-increasing percentages of university budgets being drawn from government and business research grants, which moved the center of gravity away from the humanities. The more universities promote technical skills, the more they fragment into subdisciplines. Such tendencies are reinforced by the ongoing impetus of professionalism. For faculty, loyalty to one’s profession overwhelms loyalty to one’s current institution. The research necessary for professional advancement often subverts interest in teaching. Many observers have commented on these trends.

At the same time came the attack on the other flank from the counterculture, questioning the whole ideal of a democratic consensus and of the moral superiority of the now-secularized American Way of Life. An essential element in this attack was the critique of “the myth of objective consciousness,” which pointed out the links among the establishment’s claims to legitimacy, its tendency to submit to depersonalizing technological forces, and its Enlightenment heritage of scientific authority.

By this time, the old establishment had few grounds on which to answer such challenges. Its members had long since given up any theological justification for their views and the claims to scientific authority were already weakened by the many internal critiques of the myth of objectivity. Moreover, in practical terms scientific authority pointed in too many mutually exclusive directions to be of much use. The establishment’s most plausible defense seemed to be to appeal to self-evident moral principles shared throughout the culture. Now, however, the high moral ground of liberty, justice, and openness had been captured by those who interpreted those terms in ways decidedly more radical than the establishment had ever conceived.

The result was that the old liberal (and vestigially liberal Protestant) consensus ideal collapsed. Although it lingers on among the older generation, its authority has been largely undermined by the forces that challenged it. On the one hand is the increasing growth of practical disciplines and subdisciplines that do not concern themselves with the big questions but engage in technical research or impart technical skills. The immense growth of the business major, which threatens to replace the humanities as the major alternative to natural science, is part of the trend. This may be seen as the triumph of methodological secularization, a force that had long threatened to take over the universities and the culture and was now largely unchecked by any competing humanities ideology.

At the same time, attempting to fill the ideological vacuum left by the decline of the old liberal-Protestant consensus is aggressive pluralistic secularism, growing out of the 1960s and flourishing as students of the 1960s become the tenured scholars of the 1980s and 1990s. In the name of equality and the rights of women and minorities, this group questions all beliefs as mere social constructions, denigrates what is left of the old consensus ideology, attacks the Western-oriented canon, and repudiates many conventional ethical assumptions.

V

In the context of all these forces, we can understand the residual formal role left for religion in universities. Clearly, despite the presence of many religion departments and a few university divinity schools, religion has moved from near the center a century or so ago to far on the incidental periphery. Aside from voluntary student religious groups, religion in most universities is about as important as the baseball team.

Not only has religion become peripheral, there is a definite bias against any perceptible religiously informed perspectives getting a hearing in university classrooms. Despite the claims of contemporary universities to stand above all for openness, tolerance, academic freedom, and equal rights, viewpoints based on discernibly religious concepts (for instance, that there is a created moral order or that divine truths might be revealed in a sacred Scripture), are often informally or explicitly excluded from classrooms. Especially offensive, it seems, are traditional Christian versions of such teachings, other than those Christian ethical teachings, such as special concern for the poor, that are already widely shared in the academic culture.

Conservative Christians often blame this state of affairs on a secular humanist conspiracy, but the foregoing analysis suggests that such an explanation is simplistic. Though self-conscious secularism is a significant force in academic communities, its strength has been vastly amplified by the convergence of all the other forces we have noted. Liberal Protestantism opposed traditional Christian exclusivism and helped rule it out of bounds. Methodological secularization provided a non-controversial rationale for such a move, reinforced by beliefs concerning the universal dictates of science. Concerns about pluralism and justice supplied a moral rationale. Moreover, to all these forces can be added one I have not discussed separately (though it may deserve a section of its own), the widely held popular belief, sometimes suggested in the courts but not yet consistently applied, that government funding excludes any religious teaching. With an estimated 80 percent of students today attending government-sponsored schools, this force alone is formidable.

We can see more specifically how religiously informed perspectives have fared in the university if we look briefly at the development of the actual teaching of religion in twentieth-century university curricula. During the era of liberal Protestant hegemony it became apparent that the forces of secularization had left a gap in which religion was not represented at all in the curriculum. To counter this, an influential movement developed between the World Wars to add courses in religion, some Bible chairs, some religion departments, and a few schools of religion, even at state schools. These were originally designed not only to teach about religion but also to train religious leaders and, at least implicitly, to promote religious faith. At state schools, at least, efforts were made to represent Catholicism and Judaism as well as Protestantism.

Such efforts were not always academically strong and did not have a major impact on university education. Nonetheless, they were substantial enough for Merrimon Cuninggim to conclude in a study conducted on the eve of World War II that “religion is moving once more into a central place in higher education.” Moreover, the war brought religious revival and widespread concern about the moral and religious basis for Western Civilization and so enhanced the role of religion in the undergraduate curriculum. The revival and the prominence of some broadly neo-orthodox scholars also enhanced the prestige of university divinity schools, which long had been educating ministers and now were beginning to be seen as significant centers for graduate education in religion. Religion during this era was seen largely as involving ethical concern, and hence as constituting one of the humanities. Its place in the universities was justified by its contribution to helping define the moral mission of the university to modern civilization.

By the end of the 1960s, however, the character of religious studies in the universities had begun to change significantly. The number of religion departments increased dramatically, so that by the 1970s almost every university had one. However, the rationale for such departments had now largely shifted from being an element of the humanities—with an essentially moral purpose—to being a component of the social sciences. Correspondingly, religion departments hired fewer persons with clerical training and increasing numbers with scientific credentials. Practitioners of religious studies who flooded sessions of the American Academy of Religion now often employed their own technical language, which served to legitimate the discipline’s status as a science. The new studies of the history of religions fit in well with the growing enthusiasm for pluralism in the universities. Religion departments increasingly gained legitimacy by focusing their attention on the non-Western, the nonconventional, and the (descriptively) non-Christian.

Such developments also provided impetus toward the growing popularity of a normatively non-Christian stance among practitioners of religious studies. Here I have to rely on impressionistic evidence and do not want to attribute larger trends simply to a secularist conspiracy. Nevertheless, my impression is that many religious studies programs are staffed by people who once were religious but have since lost their faith. Like most teachers, they hope that their students will come to think as they do, so that a goal of their teaching becomes, in effect, to undermine the religious faith of their students. In this pursuit they are aided by methodological secularization, which demands a detachment from all beliefs except belief in the validity of the scientific method itself. So a history-of-religions approach that suggests that the only valid way to view religions is as social constructs intentionally or unintentionally undermines belief in any particular religion as having divine origins. Of course, such negative impact of religious studies on religious faith is mitigated by many other persons in the discipline who entered the field as an extension of their religious calling and whose more positive perspectives are apparent despite pressures not to reveal any explicit religious commitment.

Those who oppose any visible commitment, however, hold the upper hand, whether because of lingering beliefs in scientific objectivity, concerns over pluralism, or alleged legal restrictions. I have even heard the suggestion that no person who believes a particular religion should be allowed to teach about it. Although this proposal is not, I think, anyone’s actual policy, one cannot imagine it even being suggested about women’s studies or black studies. It is rather like saying that at a music school no musicians should teach. And it is doubtful whether this rule would be proposed regarding a Hindu or a Buddhist. The more common rule, of course, is that in the classroom all evidence of belief must be suppressed, which means in effect that operative interpretative perspectives of believers must be kept hidden from students. Again, this rule is most consistently applied regarding traditional Protestant or traditional Catholic belief. Liberal Protestants may still be advocates if their religious expressions are largely confined to an ethic of political progressivism. Non-theists may openly express their views concerning theism.

Part of the problem, of course, is that in the field of religion we are still dealing with the vestiges of a cultural establishment. Hence it is to some extent understandable that any Christianity that implies some exclusivism should come in for special attack, since it so long has been used to support special privilege.

In any case, the presence of religion programs in universities is, on balance, not a countervailing force to the secularization of universities that we have described. It is especially ironic that their presence is sometimes used to assure pious legislators or trustees that religion is not being neglected at their universities. Religion may not be neglected, but its unique perspectives, especially those of traditional Christianity, are often excluded and even ridiculed.

VI

So what attitude should those of us who are seriously Christian in academically unpopular ways take toward contemporary university education? Should we attempt to have our distinct intellectual perspectives heard, or must we simply give way before overwhelming trends, perhaps living off the residual capital of the old establishment or finding refuge at university divinity schools? (These latter, I might add, especially those that try in some way to be distinctly Christian, appear increasingly anomalous in the overall picture.)

Clearly, it seems to me, it would be impossible for us to return to the days of a Christian consensus, liberal or conservative, even if we wanted to. Realistically, there is no way to reestablish in public and prestige private universities anything resembling even a broad Judeo-Christian moral consensus. At least we could not call it that. Reactions against its identification with Western culture are too strong. Moreover, religious conservatives and liberals have insurmountable disagreements as to what any such consensus might look like.

So what alternative is there other than to continue doing our jobs and allowing the trends that have been building for the past century to run their course? How long will it take for distinctive Christian perspectives, other than those provided by voluntary campus organizations, to disappear entirely from America’s leading universities?

Clearly it would take another major essay to propose adequate alternatives. Nonetheless, it is worth pointing out that there are two major strategies available.

The first is for seriously religious people to campaign actively for universities to apply their professions of pluralism more consistently.

I think we can clarify this proposal if we first notice a revealing feature of some of the post-1960s university pluralism. On the one hand, one of the most conventional ideas of campus pluralists is that all moral judgments are relative to particular groups; at the same time, many of these same people insist that within the university their own moral judgments should be normative for all groups. In a sense what is happening is that the post-1960s, postmodernist generation so influential in contemporary academia is falling into the same role played by the old white Protestant male establishment. Despite their rhetoric of pluralism and their deconstructionist ideologies, many in practice behave as though they held Enlightenment-like self-evident universal moral principles. As with the old champions of liberal consensus, they want to eliminate from academia those who do not broadly share their outlook. In fact, their fundamental premise that all truth claims are socially constructed is not far removed from that of old-style liberal pragmatists. Like the pragmatists, who also thought they were attacking the Enlightenment, they in practice need to presume a more universal moral standard in order to operate. For old-style pragmatist liberals such often unacknowledged standards were the principles of modern Western democracy. For the postmoderns a more broadly inclusivist world-oriented moral absolutism is substituted.

My proposal would be to try to eliminate this anomaly. It seems to me that, so far as public policy is concerned, some version of pragmatic pluralism is indeed the only live academic option. It is deeply ingrained in the American tradition and it is difficult to envision a viable alternative. My only proviso is that it ought to be challenged to be more consistently pragmatic and pluralistic. If in public places like our major universities we are going to operate on the premise that moral judgments are relative to communities, then we should follow the implications of that premise as consistently as we can and not absolutize one, or perhaps a few, sets of opinions and exclude all others. In other words, our pluralism should attempt to be more consistently inclusive, including even traditional Christian views.

This suggestion for a broader pluralism does not mean that it was a mistake for those who have managed our universities, whether Christians, advocates of the Enlightenment, old liberals, or post-1960s pluralists, to seek some working consensus of shared values. Even deconstructionists, it appears to me, cannot do without some such consensus. Rather, without illusions that our worldview will be shared by all others, we can nonetheless look for commonalities in traditions that can be shared. In fact, there are more such commonalities than we might imagine, since persons living in the same era share many of the same experiences. For instance, a common consensus has developed across most American communities that women and minorities should not be discriminated against in higher education. It makes sense to build university policy on such widely shared principles.

Once we get this far, however, the question is what, if anything, do we exclude? Clearly not all views are permissible, and certainly some practices such as sexual or racial harassment are appropriately excluded. However, if we are serious about recognizing that we should not expect all communities to share our own moral judgments, we will not absolutize the views of the majority. Rather we will permit expressions of a wide variety of responsibly presented minority opinions, including some very unpopular ones.

Advocating such a broader pluralism does not imply that Christians should be moral relativists. In fact, we can believe, as the Jewish and Christian traditions have always held, that true moral laws ultimately are creations of God, whatever approximations or rejections of them human communities may construct. Nonetheless, we may also hold that so far as public policy is concerned in a pluralistic society, justice is best served by a Madisonian approach that thwarts the tyranny of the majority.

Especially in universities, which of all institutions in a society should be open to the widest-ranging free inquiry, such a broader pluralism would involve allowing all sorts of Christian and other religiously based intellectual traditions back into the discussion. If I interpret the foregoing history correctly, almost all the rationales for why such viewpoints were excluded (those having to do with disestablishment and beliefs in universal science) no longer obtain. The only major ongoing factor is concern for justice in a pluralistic society, but that concern would seem now to favor admission of religious perspectives.

Regaining a place for religiously informed perspectives will require some consciousness raising comparable to that which has come from other groups who have endured social exclusion. Nonetheless, I think it is fair to ask whether it is consistent with the vision of contemporary universities to discriminate against religiously informed views, when all sorts of other advocacy and intellectual inquiry are tolerated.

Of course, there would have to be some rules of the game that would require intellectual responsibility, civility, and fairness to traditions with which one disagreed. Nonetheless, it would seem to me to be both more fair and more consistent with the pluralistic intellectual tenor of our times if, instead of having a rule that religious perspectives must be suppressed in university teaching, we would encourage professors to reveal their perspectives, so that they might be taken into account. So, for example, if a Mormon, a Unificationist, a Falwell Fundamentalist, or a Harvey Cox liberal were teaching my children, it would seem to me that truth in marketing should demand that they state their perspective openly. The same rule should apply to all sorts of secularists.

Perhaps what post-Enlightenment universities, which presumably recognize that there is no universal scientific or moral vision that will unite the race, most need to do is to conceive of themselves as federations for competing intellectual communities of faith or commitment. This might be more difficult for American universities than for British or some Canadian universities, which have always seen themselves as federations of colleges, among which there was often diversity. American schools, by contrast, were shaped first by sectarian Christian and then by Enlightenment and liberal Protestant ideals that assumed that everyone ought to think alike. Nonetheless, if American schools would be willing to recognize diversity and perhaps even to incorporate colleges with diverse commitments, whether religious, feminist, gay, politically liberal or conservative, humanist, liberationist, or whatever, pluralism might have a genuine chance to thrive. The alternative seems to be to continue the succession of replacing one set of correct views with a new consensus that is to be imposed on everyone—which is not pluralism at all.

The other strategy, which may be more realistic, is that serious Christians should concentrate on building distinctly Christian institutions that will provide alternatives to secular colleges and universities. Perhaps the situation in the universities and in the academic professions that staff them is hopeless and irreversible. If so, Christians and other religious people should view the situation realistically and give up on the cultural illusion that serious religion will just fit in with the common culture.

Here I am thinking most immediately of building research and graduate study centers in key fields at the best institutions in various Christian subcultures. Such efforts would require some sacrifice of academic prestige, at least temporarily, and hence some sacrifice of possible influence in the wider culture. Nonetheless, if churches do their jobs well in higher education, they are likely to produce communities that are intellectually, spiritually, and morally admirable. They may not be widely liked in the broader culture, but being well liked by the culture has never been one of the gospel promises. Perhaps, given the historical developments we have observed, it is time for Christians in the postmodern age to recognize that they constitute an unpopular sect.

It is incumbent on seriously religious people in mainline educational institutions who do not like this sectarian alternative to suggest a better option.

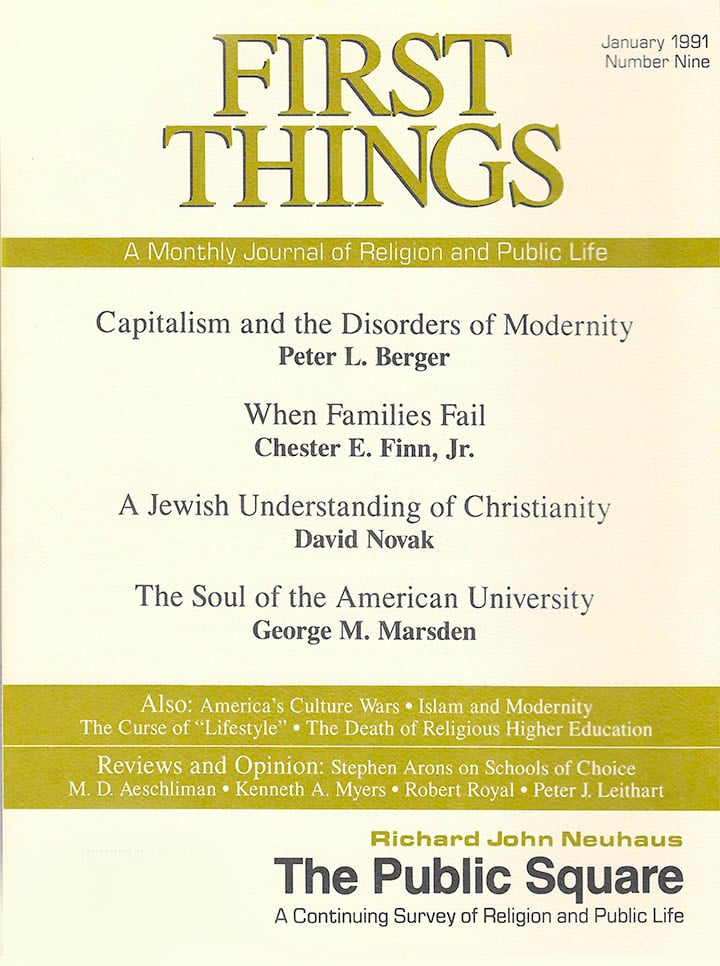

George M. Marsden teaches in the Divinity School at Duke University and is the author of Fundamentalism and American Culture: The Shaping of Twentieth-Century Evangelicalism, 1870-1925.
